OLMo - Accelerating the Science of Language Models

The figure is from https://github.com/allenai/OLMo. All rights reserved to its owner.

Paper link: https://allenai.org/olmo/olmo-paper.pdf

Homepage: https://github.com/allenai/OLMo

TL;DR

OLMo is short for Open Language Model. As the name indicates, the main contribution, according to the authors, is that the language model is truly open. The authors argue that many Large Language Models (LLMs) are closed source for commercial reasons. A few models are “open”, such as LLaMA, Mixtral 8\(\times\)7B, Mosaic, Falcon, the Pythia suite, and BLOOM. However, given the datasets and training details that are available, those models are still hard to reproduce.

OLMo intends to close this gap. It releases the datasets (including the tools for generating them), training logs, intermediate checkpoints, evaluation tools, and evaluation datasets. This makes it possible for more researchers to reproduce the model and conduct research to better understand LLMs, though computational resources remain a major obstacle.

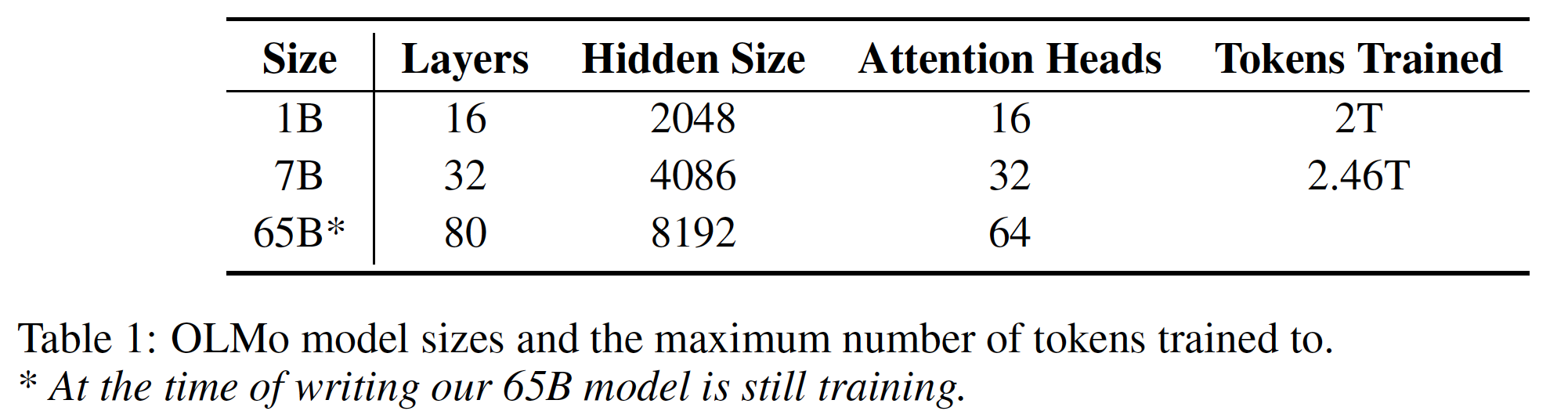

Architecture

Questions:

- What are the differences between encoder-decoder, encoder-only, and decoder-only structures?

This article might answer the question: https://vaclavkosar.com/ml/Encoder-only-Decoder-only-vs-Encoder-Decoder-Transfomer

An encoder-only model, such as BERT, takes text as input and outputs a sequence of embeddings. Those embeddings are used for downstream tasks, such as sequence classification. Vision Transformer (ViT) is also an encoder-only model.

A decoder-only architecture takes a sequence of text as input and outputs the next word or token. It is used in many text generation tasks.
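One way to see the difference between the encoder-only and decoder-only cases is the attention mask and the generation loop. The following is a minimal sketch, not OLMo's code: `attention_mask`, `generate`, and the toy bigram table are hypothetical names made up for illustration, and the lookup table stands in for a trained model.

```python
from __future__ import annotations


def attention_mask(seq_len: int, causal: bool) -> list[list[int]]:
    """mask[i][j] == 1 means position i may attend to position j."""
    return [
        [1 if (not causal or j <= i) else 0 for j in range(seq_len)]
        for i in range(seq_len)
    ]


def generate(next_token, prompt: list[str], max_new_tokens: int) -> list[str]:
    """Autoregressive decoding: each predicted token is appended to the input."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(next_token(tokens))
    return tokens


# Hypothetical stand-in for a trained decoder-only model: a bigram lookup.
TOY_BIGRAMS = {"open": "language", "language": "model", "model": "<eos>"}


def toy_next_token(tokens: list[str]) -> str:
    return TOY_BIGRAMS.get(tokens[-1], "<eos>")


# Encoder-only (BERT-style): every position sees every other position.
encoder_mask = attention_mask(3, causal=False)
# Decoder-only: position i sees only positions 0..i.
decoder_mask = attention_mask(3, causal=True)
generated = generate(toy_next_token, ["open"], max_new_tokens=2)
```

The causal mask is what lets a decoder-only model be trained to predict the next token at every position at once, while the full mask suits embeddings that summarize the whole input.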

An encoder-decoder model takes an input such as text into the encoder, and the decoder outputs the next word or token, which is then appended to the decoder input. In between, cross-attention is usually applied so that information passes efficiently from the encoder to the decoder. Attention-based encoder-decoder ASR is one example. This architecture is preferred when the input and output differ, for example in modality or language.
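The cross-attention step can be sketched in a few lines. This is a simplified illustration under stated assumptions: a single head, no learned query/key/value projections, and made-up 2-dimensional states; `cross_attention` is a hypothetical helper, not a library function.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(decoder_states, encoder_states):
    """Each decoder position (query) attends over all encoder positions.

    Keys and values are both the raw encoder states here; a real model
    would first apply learned linear projections.
    """
    d = len(encoder_states[0])
    outputs = []
    for q in decoder_states:
        # Scaled dot-product score between this query and every encoder state.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d)
            for k in encoder_states
        ]
        weights = softmax(scores)
        # Weighted average of encoder states: the context for this query.
        outputs.append(
            [sum(w * v[c] for w, v in zip(weights, encoder_states)) for c in range(d)]
        )
    return outputs


encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 input positions
decoder_states = [[1.0, 0.0], [0.0, 1.0]]              # 2 output positions so far
context = cross_attention(decoder_states, encoder_states)
```

Note that the input and output lengths differ (3 versus 2): the result has one context vector per decoder position, which is why this arrangement fits tasks where input and output are different sequences.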