Equilibrium Matching: Generative Modeling with Implicit Energy-Based Models

Homepage: https://raywang4.github.io/equilibrium_matching/

Paper link: https://arxiv.org/abs/2510.02300

Code: https://github.com/raywang4/EqM

Background

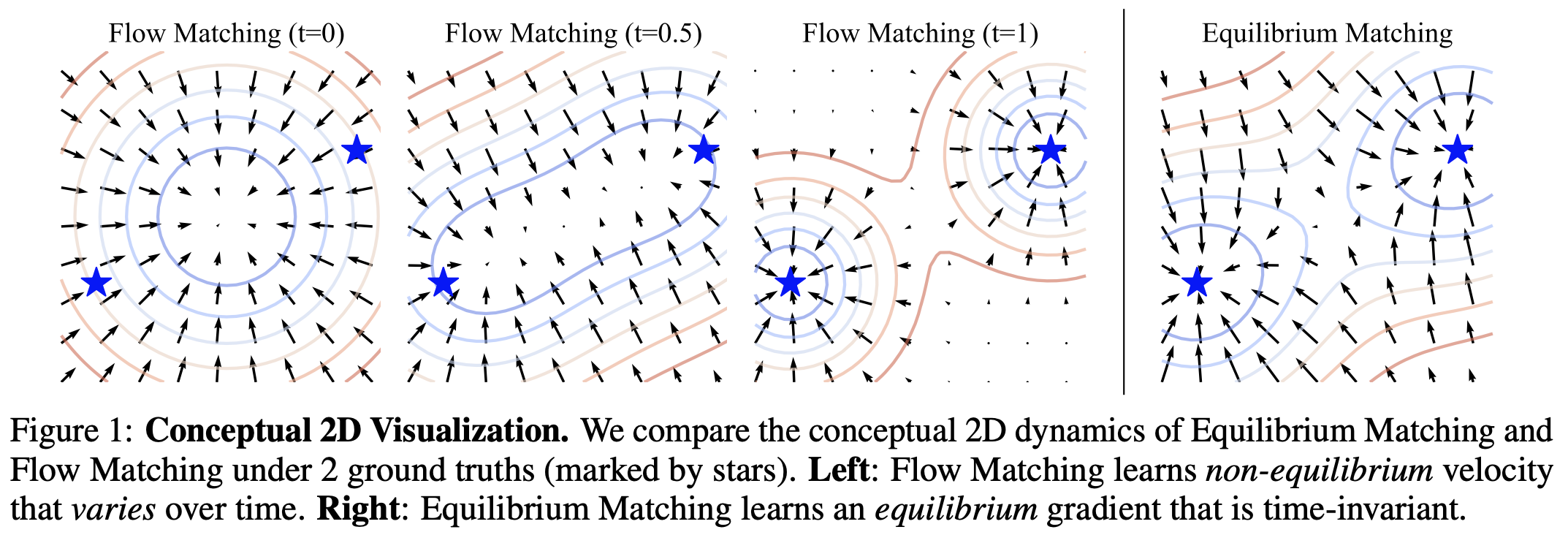

Diffusion and Flow Matching models learn non-equilibrium dynamics. Flow Matching (FM), for example, trains a network $f$ to match the conditional velocity along a linear path connecting noise and image samples. During sampling, FM starts from pure Gaussian noise and iteratively denoises the current sample using the velocity predicted by $f$. The training objective is:

\begin{equation} \label{eq:FM_obj} L_{FM} = (f(x_t, t) - (x - \epsilon))^2. \end{equation}
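To make the objective concrete, here is a minimal NumPy sketch of the FM loss and of Euler sampling. It assumes the linear path is $x_t = t x + (1 - t)\epsilon$, which is the parameterization whose velocity is $x - \epsilon$. The names `fm_loss`, `euler_sample`, and `toy_velocity` are hypothetical and not taken from the paper's code, and the toy velocity field stands in for a trained network.

```python
import numpy as np

def fm_loss(f, x, rng):
    """Monte Carlo estimate of the FM objective for a batch x of shape (B, D)."""
    eps = rng.standard_normal(x.shape)       # Gaussian noise, one sample per example
    t = rng.uniform(size=(x.shape[0], 1))    # one time in [0, 1) per example
    x_t = t * x + (1.0 - t) * eps            # assumed linear path from noise to data
    target = x - eps                         # conditional velocity along that path
    return float(np.mean(np.sum((f(x_t, t) - target) ** 2, axis=1)))

def euler_sample(f, x_init, num_steps):
    """Integrate dx/dt = f(x, t) from t = 0 (noise) to t = 1 with Euler steps."""
    x = x_init.copy()
    dt = 1.0 / num_steps
    for k in range(num_steps):
        t = np.full((x.shape[0], 1), k * dt)
        x = x + dt * f(x, t)
    return x

# Toy check with a single data point x0: on the path above,
# x0 - eps = (x0 - x_t) / (1 - t), so this field attains zero loss.
x0 = np.array([[2.0, -1.0]])

def toy_velocity(x, t):
    return (x0 - x) / (1.0 - t)

rng = np.random.default_rng(0)
data = np.repeat(x0, 8, axis=0)
loss = fm_loss(toy_velocity, data, rng)
samples = euler_sample(toy_velocity, rng.standard_normal((4, 2)), num_steps=10)
```

Note that both the loss and the sampler pass the time $t$ to $f$; the sampler is tied to a schedule that runs from $t = 0$ to $t = 1$.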

Method

Equilibrium Matching (EqM) constructs an energy landscape in which ground-truth samples lie in the valleys and noise sits on the peaks. The landscape is built by adding noise to real data.

The objective function is very similar to the FM objective. It is written as:

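\begin{equation} \label{eq:EqM_obj} L_{EqM} = (f(x_\gamma) - (\epsilon - x)c(\gamma))^2. \end{equation}

Unlike the FM velocity $x - \epsilon$, the target here points from data toward noise ($\epsilon - x$), so that descending the learned gradient moves a sample toward the data. The network $f$ also takes no time input.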

To explain the objective above, the paper first constructs an energy landscape in which the target gradient at the ground-truth samples is zero, which places them in valleys. It also defines a corruption scheme: $\gamma$ is an interpolation factor sampled uniformly between 0 and 1, and the intermediate interpolated sample is $x_{\gamma}=\gamma x + (1-\gamma) \epsilon$, where $\epsilon$ is Gaussian noise. At $\gamma = 1$ the sample is clean data, so the zero-gradient requirement amounts to $c(1) = 0$. The model learns the path from high-energy points (noisy samples) to low-energy points (real data).
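The corruption scheme and regression target can be sketched in a few lines of NumPy. Two things here are assumptions of the sketch rather than facts from this post: the target is oriented from data toward noise, $(\epsilon - x)c(\gamma)$, so that gradient descent moves toward the data, and $c(\gamma) = 1 - \gamma$ is used only as a simple illustrative magnitude function with $c(1) = 0$. The names `c_linear`, `eqm_training_pair`, and `eqm_loss` are hypothetical.

```python
import numpy as np

def c_linear(gamma):
    """Illustrative magnitude function (an assumption): zero at gamma = 1."""
    return 1.0 - gamma

def eqm_training_pair(x, rng, c=c_linear):
    """Build corrupted inputs x_gamma and gradient targets for a batch x of shape (B, D)."""
    eps = rng.standard_normal(x.shape)           # Gaussian noise
    gamma = rng.uniform(size=(x.shape[0], 1))    # interpolation factor in [0, 1)
    x_gamma = gamma * x + (1.0 - gamma) * eps    # interpolated sample
    target = (eps - x) * c(gamma)                # assumed data-to-noise orientation
    return x_gamma, target

def eqm_loss(f, x, rng, c=c_linear):
    """Monte Carlo estimate of the EqM objective; f takes no time input."""
    x_gamma, target = eqm_training_pair(x, rng, c)
    return float(np.mean(np.sum((f(x_gamma) - target) ** 2, axis=1)))

# Toy check with a single data point x0 and c(gamma) = 1 - gamma:
# x_gamma - x0 = (1 - gamma) * (eps - x0), which is exactly the target.
rng = np.random.default_rng(0)
x0 = np.array([[2.0, -1.0]])
data = np.repeat(x0, 8, axis=0)
x_gamma, target = eqm_training_pair(data, rng)
loss = eqm_loss(lambda z: z - x0, data, rng)
```

In this one-point toy case the optimal field is $f(x) = x - x_0$, the gradient of the quadratic energy $\tfrac{1}{2}\lVert x - x_0 \rVert^2$, whose only valley is the data point itself.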

The paper gives further details on how to construct the gradient magnitude function $c(\gamma)$ and on how the model can learn an explicit energy.

In contrast to FM and diffusion, inference appears simpler: a ‘Gradient Descent Sampling’ method can be used.
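As a rough illustration of gradient descent sampling, the sketch below applies the plain update $x_{k+1} = x_k - \eta f(x_k)$. The function `gd_sample` and the analytic field `toy_gradient` are hypothetical stand-ins: the toy field is the gradient of a quadratic energy with a single valley at `mu`, used in place of a trained network, and the step size and step count are arbitrary choices.

```python
import numpy as np

def gd_sample(f, x_init, step_size, num_steps):
    """Plain gradient descent on a learned landscape: x <- x - step_size * f(x)."""
    x = x_init.copy()
    for _ in range(num_steps):
        x = x - step_size * f(x)
    return x

# Toy stand-in for a trained network (an assumption): the gradient of the
# quadratic energy 0.5 * ||x - mu||^2, whose single minimum is at mu.
mu = np.array([[2.0, -1.0]])

def toy_gradient(x):
    return x - mu

rng = np.random.default_rng(0)
x_init = rng.standard_normal((4, 2))    # start from pure Gaussian noise
samples = gd_sample(toy_gradient, x_init, step_size=0.1, num_steps=200)
```

No time variable or schedule appears in the loop, so the step size and the number of steps can be chosen freely at inference time.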

All the details are worth reading in the paper.

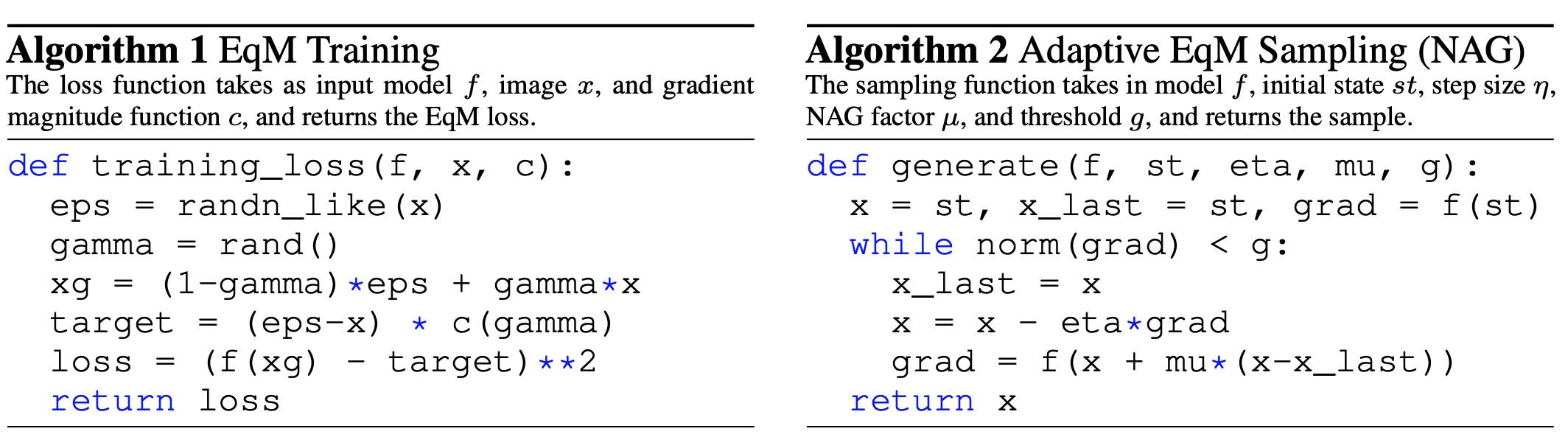

The training and inference pseudocode: