WAN Video Generative Models

Wan is a fully open-sourced model series from Alibaba Group.

Technical report: https://arxiv.org/pdf/2503.20314

GitHub: https://github.com/Wan-Video/Wan2.1 and https://github.com/Wan-Video/Wan2.2

The project aims to open-source everything, including the training process, data curation, training strategies, and evaluation algorithms.

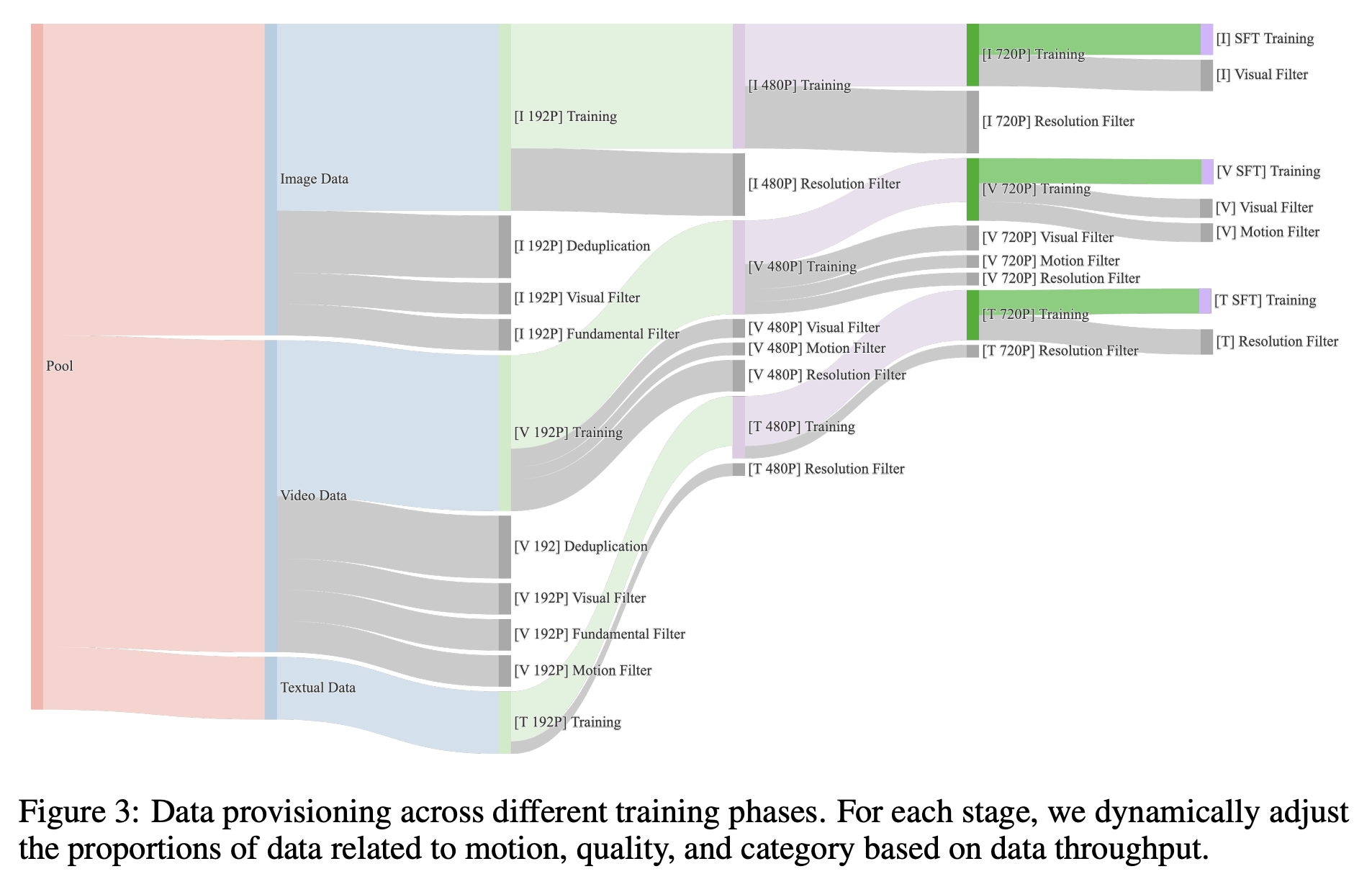

Data

Model design

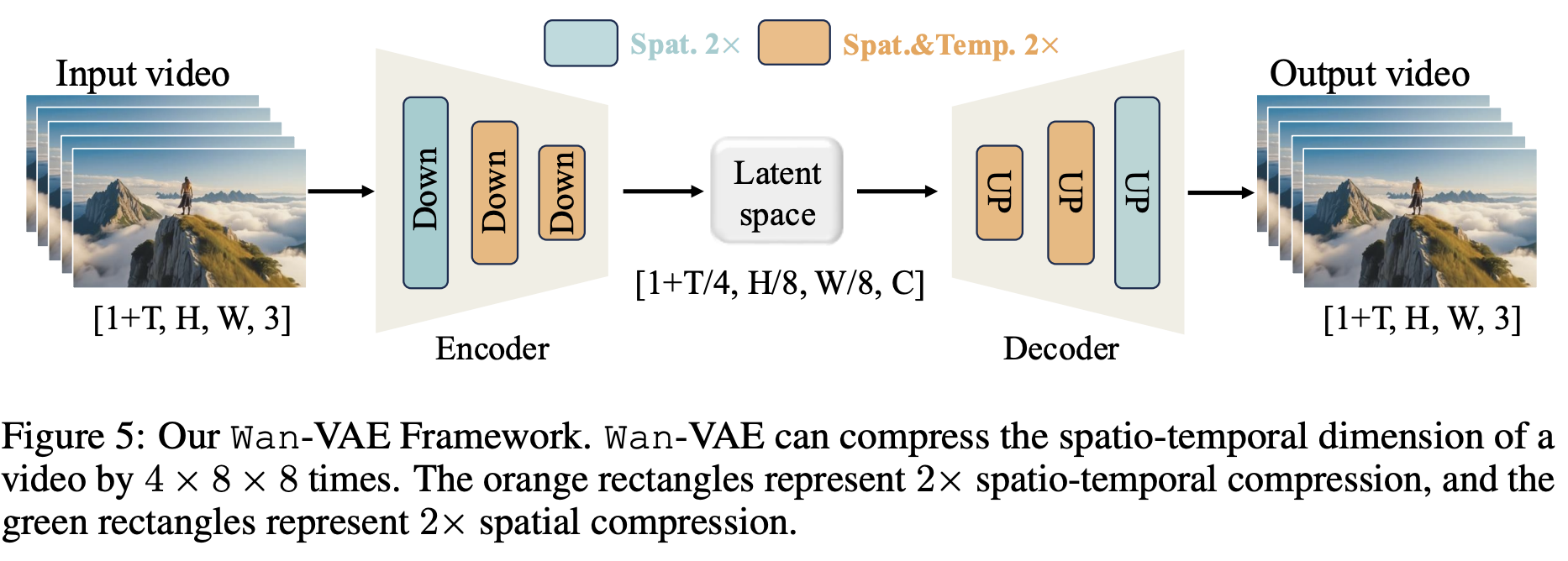

Wan-VAE

Wan-VAE is a 3D causal VAE, meaning it models the temporal axis causally: each latent frame depends only on the current and earlier input frames. It compresses a video by a factor of $4 \times 8 \times 8$ (time $\times$ height $\times$ width). A higher compression rate is desirable because it shortens the sequence that the downstream generative model must handle. Both LTX-Video

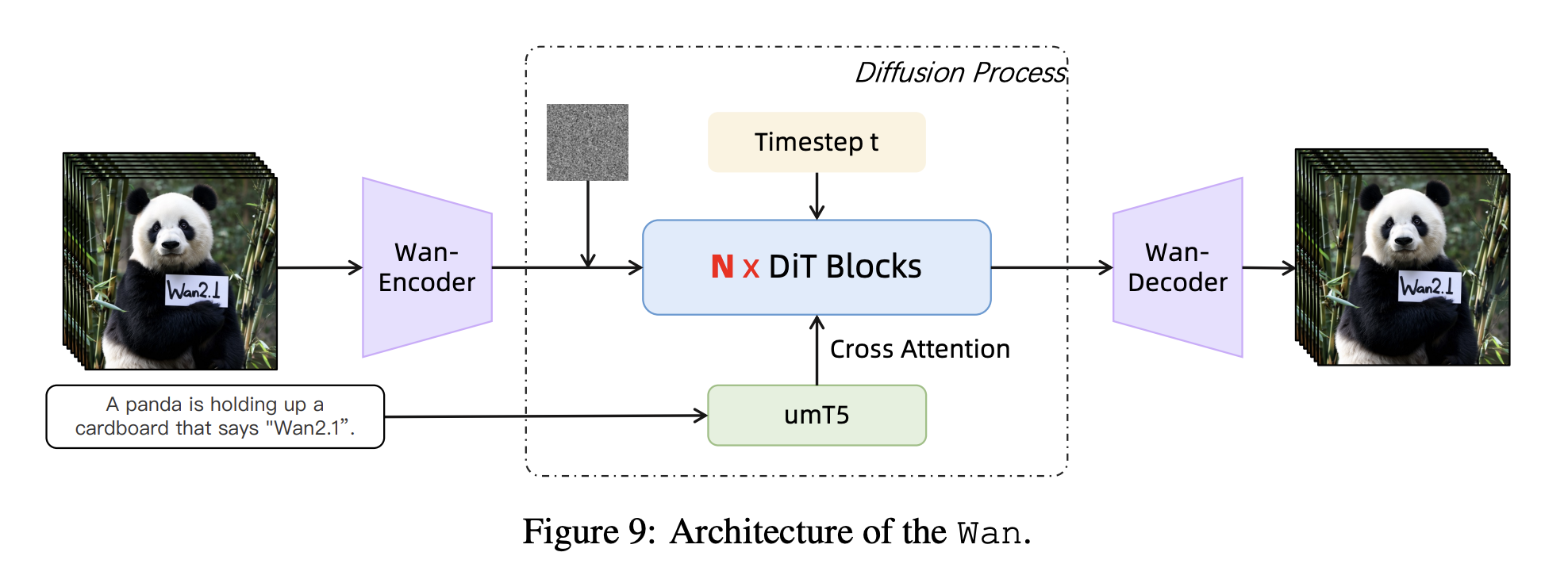

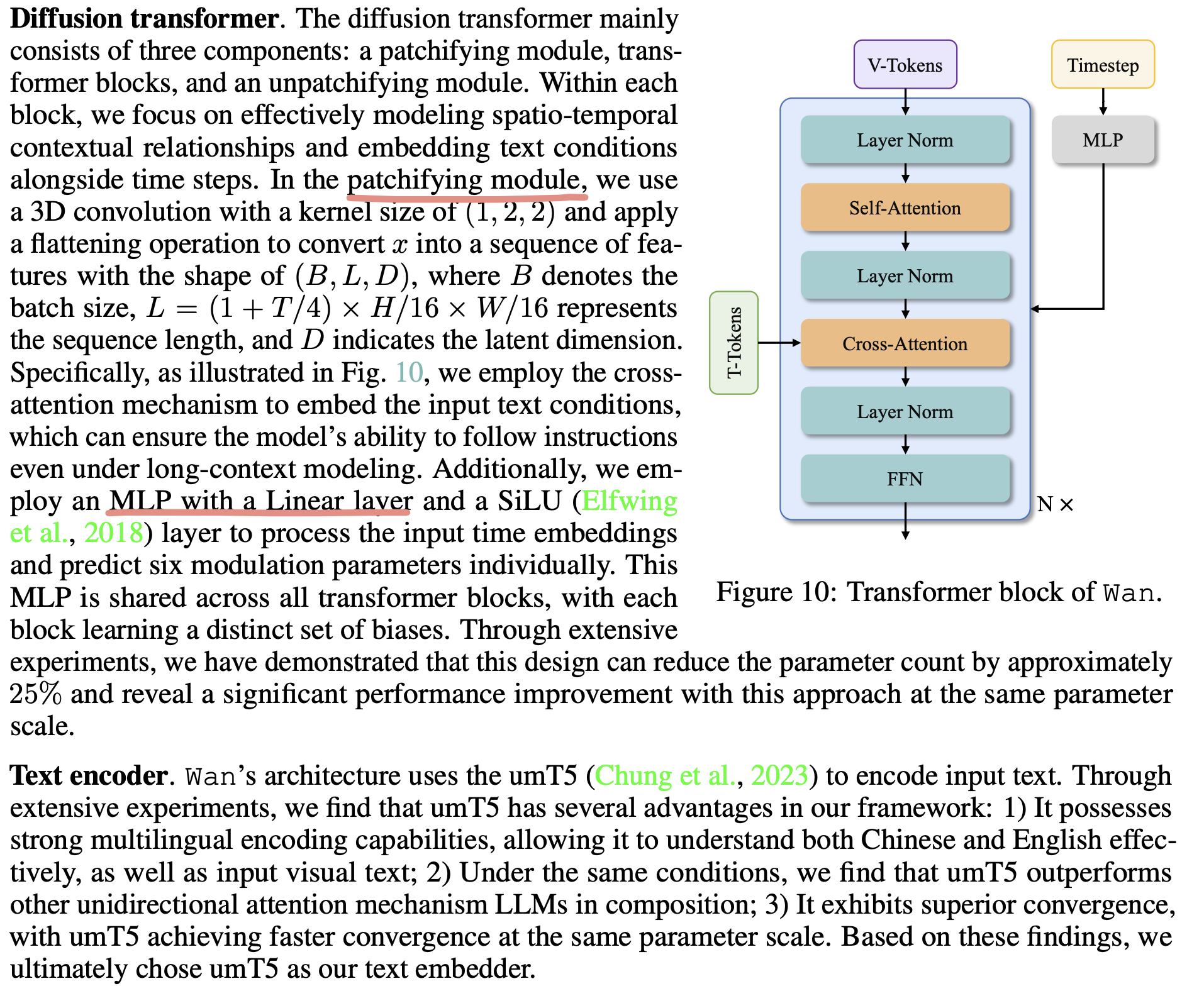
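
To make the $4 \times 8 \times 8$ compression factor concrete, here is a minimal sketch that computes the latent grid size for a given clip. The function name `latent_shape` is hypothetical, and the frame rule `1 + (T - 1) / 4` (first frame encoded on its own, the rest in groups of four) is an assumption based on how causal video VAEs are commonly set up, not something stated in these notes. The 81 x 480 x 832 input is only an illustrative size.

```python
def latent_shape(num_frames, height, width, causal_first_frame=True):
    """Return (frames, height, width) of the latent grid for a 4x8x8 VAE."""
    t_stride, s_stride = 4, 8
    if height % s_stride or width % s_stride:
        raise ValueError("height and width must be divisible by 8")
    if causal_first_frame:
        # Assumed convention: the first frame is encoded alone,
        # the remaining frames are compressed in groups of 4.
        if (num_frames - 1) % t_stride:
            raise ValueError("num_frames must have the form 1 + 4k")
        latent_frames = 1 + (num_frames - 1) // t_stride
    else:
        # Plain division of the temporal axis by 4.
        if num_frames % t_stride:
            raise ValueError("num_frames must be divisible by 4")
        latent_frames = num_frames // t_stride
    return latent_frames, height // s_stride, width // s_stride


print(latent_shape(81, 480, 832))  # (21, 60, 104)
```

Under these assumptions, an 81-frame clip shrinks from 81 x 480 x 832 pixel positions to 21 x 60 x 104 latent positions, roughly 247 times fewer spatio-temporal positions.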

Video Diffusion Transformer

The video diffusion transformer is straightforward. It uses cross-attention to incorporate text tokens from the umT5 model.
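
As a rough illustration of how cross-attention lets video tokens read from text tokens, here is a single-head NumPy sketch. It is not the Wan implementation: all dimensions, weight matrices, and the function name `cross_attention` are made up for the example, and real models add multiple heads, normalization, and an output projection.

```python
import numpy as np


def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def cross_attention(video_tokens, text_tokens, w_q, w_k, w_v):
    """Queries come from video tokens; keys and values come from text tokens."""
    q = video_tokens @ w_q                    # (n_video, d_head)
    k = text_tokens @ w_k                     # (n_text, d_head)
    v = text_tokens @ w_v                     # (n_text, d_head)
    scores = q @ k.T / np.sqrt(q.shape[-1])   # (n_video, n_text)
    weights = softmax(scores, axis=-1)        # each row sums to 1
    return weights @ v, weights


rng = np.random.default_rng(0)
d_model, d_text, d_head = 16, 12, 8           # illustrative sizes only
video_tokens = rng.normal(size=(6, d_model))  # 6 video (latent patch) tokens
text_tokens = rng.normal(size=(4, d_text))    # 4 text-encoder tokens
w_q = rng.normal(size=(d_model, d_head))
w_k = rng.normal(size=(d_text, d_head))
w_v = rng.normal(size=(d_text, d_head))

out, weights = cross_attention(video_tokens, text_tokens, w_q, w_k, w_v)
print(out.shape, weights.shape)  # (6, 8) (6, 4)
```

Each video token ends up with a weighted mixture of the text values, which is how the prompt conditions every spatio-temporal position.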

Training

pre-training

Image pre-training:

To mitigate these issues, we initialize the 14B model training through low-resolution (256 px) text-to-image pre-training, enforcing cross-modal semantic-textual alignment and geometric structure fidelity before progressively introducing high-res video modalities.

How are "cross-modal semantic-textual alignment and geometric structure fidelity" enforced? The passage means that low-resolution text-to-image pre-training is itself the mechanism: a model pre-trained this way ends up with both properties before video data is introduced.

Image-video joint training. Following large-scale 256 px text-to-image pre-training, we implement staged joint-training with image and video data through a resolution-progressive curriculum. The training protocol comprises three distinct stages differentiated by spatial resolution areas: (1) In the first stage, we conduct joint training with 256 px resolution images and 5-second video clips (192 px resolution, 16 fps). (2) In the second stage, we initiate spatial resolution scaling by upgrading both image and video resolutions to 480px while maintaining a fixed 5-second video duration. (3) In the final phase, we escalate spatial resolution to 720px for both images and 5-second video clips.
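
The three-stage curriculum quoted above can be written down as a small table in code. This is only a sketch for reading the schedule: the `Stage` class and `is_progressive` helper are hypothetical names, and the frame rate is filled in only for stage 1 because that is the only stage where the quoted text states it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Stage:
    image_px: int
    video_px: int
    video_seconds: int
    fps: Optional[int] = None  # only stated for stage 1 in the quoted text


STAGES = [
    Stage(image_px=256, video_px=192, video_seconds=5, fps=16),
    Stage(image_px=480, video_px=480, video_seconds=5),
    Stage(image_px=720, video_px=720, video_seconds=5),
]


def is_progressive(stages):
    """True if neither image nor video resolution decreases between stages."""
    pairs = zip(stages, stages[1:])
    return all(
        a.image_px <= b.image_px and a.video_px <= b.video_px for a, b in pairs
    )


print(is_progressive(STAGES))                   # True
print(STAGES[0].video_seconds * STAGES[0].fps)  # 80
```

The second print shows that a 5-second clip at 16 fps is about 80 frames per stage-1 video sample.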

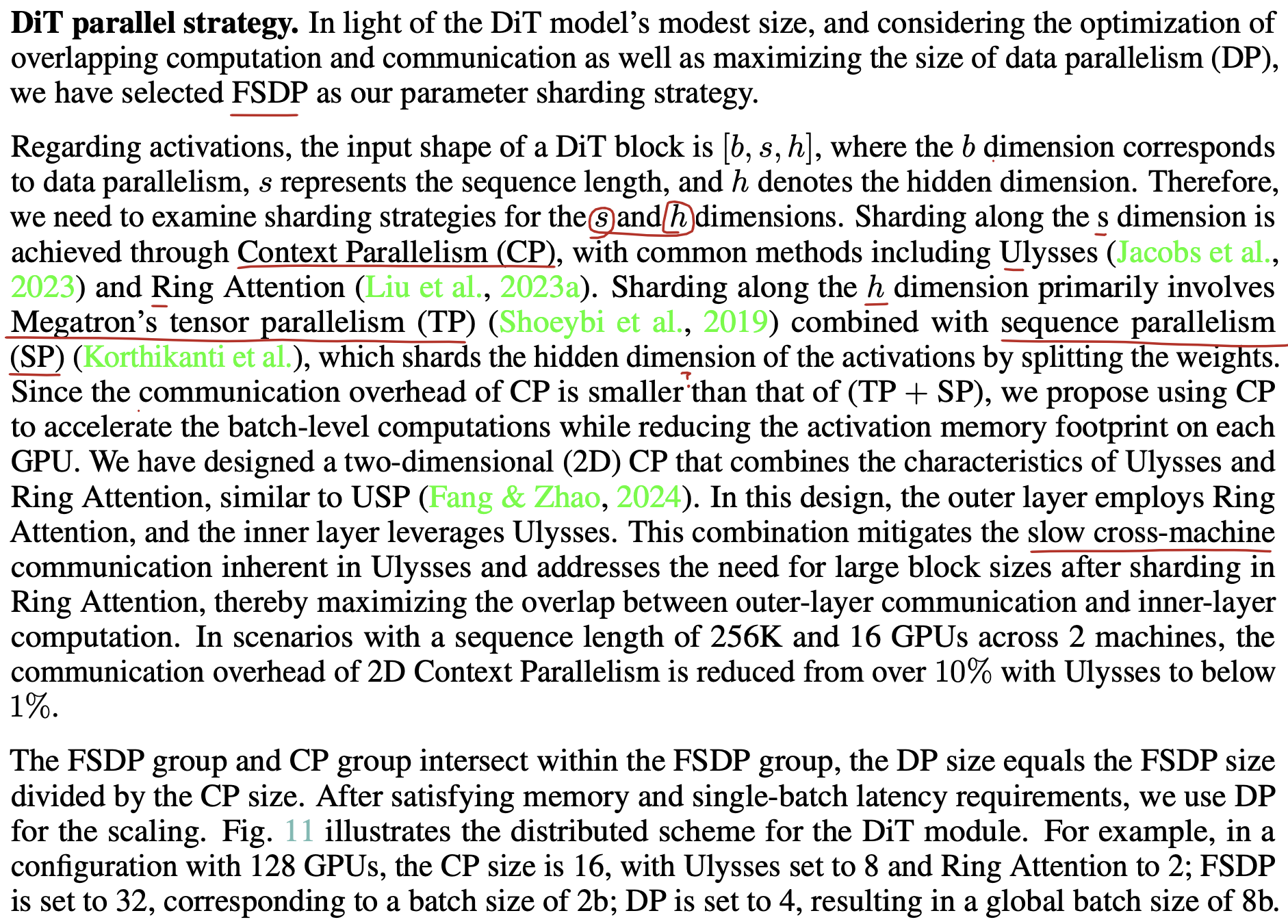

parallel strategy

In large-model training, parallelism is key.