Masked Audio Generation using a Single Non-Autoregressive Transformer

Figure is from https://pages.cs.huji.ac.il/adiyoss-lab/MAGNeT; all rights reserved by its owner.

Paper link: https://arxiv.org/abs/2401.04577

Homepage: https://pages.cs.huji.ac.il/adiyoss-lab/MAGNeT


The overall idea of MAGNeT is to iteratively generate discrete audio tokens conditioned on the tokens generated so far, predicting many tokens in parallel at each step. These tokens are then converted into an audio waveform with a pretrained codec. The mask-and-generate idea had been proposed for image generation before, e.g. in MaskGIT.

Background

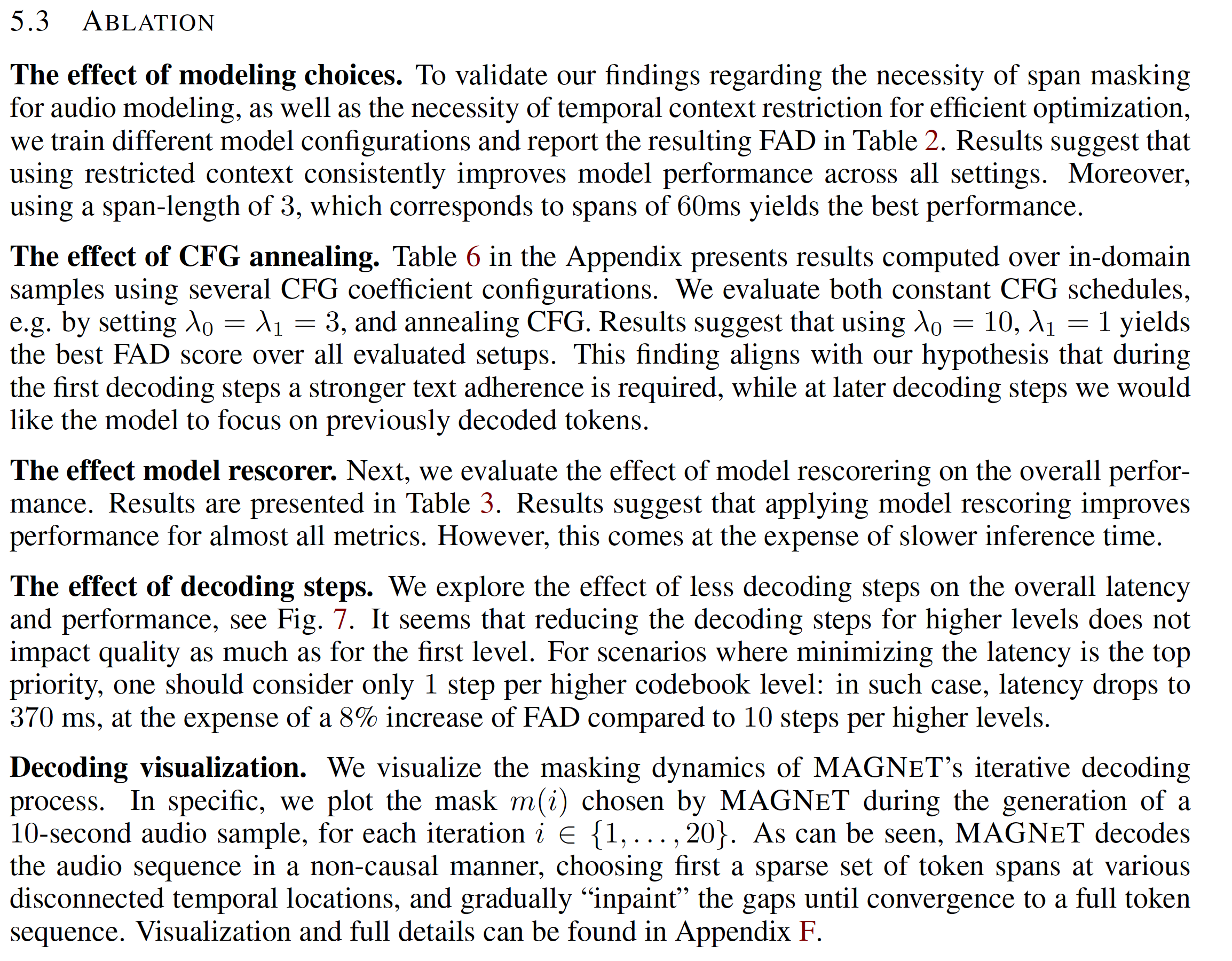

MaskGIT

The figure above gives an easy-to-understand overview of the MaskGIT pipeline.

The pipeline has two stages. The first stage, tokenization, trains a tokenizer that converts the original image into discrete tokens; it typically has an encoder-decoder (auto-encoder) structure, e.g. the VQGAN used in MaskGIT. The second stage is referred to as masked modeling: a bidirectional transformer is trained to predict randomly masked tokens from the unmasked ones. During inference, decoding starts from a blank canvas with all tokens masked out and repeats four steps for a fixed number of iterations:

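- Predict: predict a probability distribution over the codebook for every masked token;

- Sample: sample a token at each masked position from its predicted probabilities;

- Mask schedule: compute how many tokens to mask again, using the mask scheduling function (MaskGIT uses a cosine schedule);

- Mask: re-mask that number of newly sampled tokens, choosing those with the lowest predicted confidence.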
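The four steps above can be sketched as a simple loop. This is a minimal, simplified sketch rather than MaskGIT's implementation: `predict` is a hypothetical stand-in for the bidirectional transformer (it returns one probability list per position), the confidence of a sampled token is taken to be its predicted probability, and the temperature-annealed noise MaskGIT adds to confidences is omitted.

```python
import math
import random
from typing import Callable, List, Optional

MASK = None  # marks a masked position on the canvas


def cosine_schedule(r: float) -> float:
    """Fraction of tokens still masked at decoding progress r in [0, 1]."""
    return math.cos(math.pi / 2 * r)


def iterative_decode(
    predict: Callable[[List[Optional[int]]], List[List[float]]],  # hypothetical model
    seq_len: int,
    num_steps: int,
    rng: random.Random,
) -> List[Optional[int]]:
    tokens: List[Optional[int]] = [MASK] * seq_len  # blank canvas: everything masked
    for step in range(num_steps):
        # 1. Predict: a distribution over the codebook for every position
        probs = predict(tokens)
        confidences = []
        for i in range(seq_len):
            if tokens[i] is MASK:
                # 2. Sample: draw a token for each masked position
                tok = rng.choices(range(len(probs[i])), weights=probs[i])[0]
                tokens[i] = tok
                confidences.append((probs[i][tok], i))
        # 3. Mask schedule: how many tokens stay masked after this step
        num_masked = math.floor(cosine_schedule((step + 1) / num_steps) * seq_len)
        # 4. Mask: re-mask the least confident of the newly sampled tokens
        confidences.sort()
        for _, i in confidences[:num_masked]:
            tokens[i] = MASK
    return tokens  # the schedule reaches 0 at the last step, so nothing is masked
```

Tokens fixed in earlier iterations are never re-masked; only the newly sampled ones compete on confidence, and the cosine schedule drives the number of masked tokens to zero at the final step.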

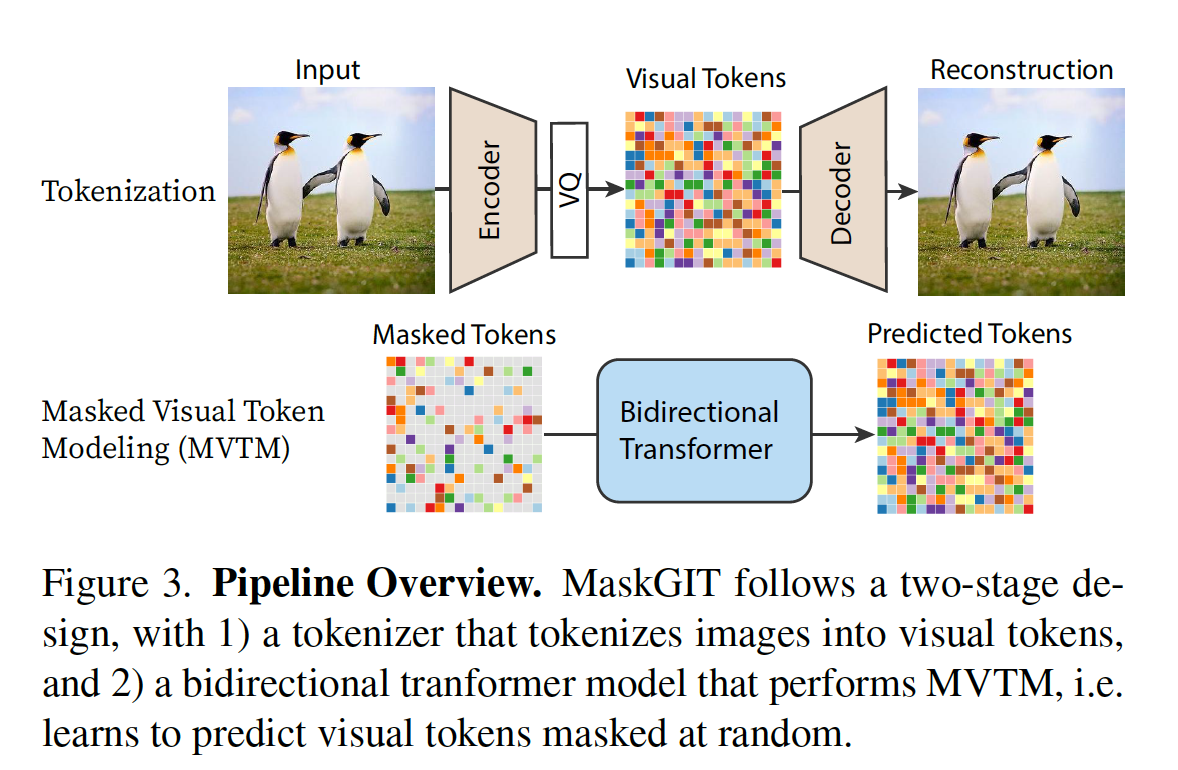

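Text condition. A follow-up paper of MaskGIT is Muse, which conditions the masked transformer on text embeddings from a frozen, pretrained T5-XXL language model.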

Audio representation. Two types of representation are prevalent: discrete (tokens) and continuous. The discrete representation is used in many neural audio codecs, such as EnCodec. Specifically, an auto-encoder maps the waveform into a latent space, and Residual Vector Quantization (RVQ) converts the continuous latent representation into discrete tokens: the first codebook quantizes the latent, and each subsequent codebook quantizes the residual left by the previous ones. These discrete tokens can be manipulated (e.g. transmitted) and then converted back into a waveform by the decoder of the auto-encoder.
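To make RVQ concrete, here is a toy sketch with hand-picked one-dimensional codebooks. It is an illustration only: real codecs such as EnCodec learn their codebooks and quantize high-dimensional latents, and the names `rvq_encode` and `rvq_decode` are hypothetical.

```python
from typing import List, Sequence

Vector = List[float]


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def rvq_encode(z: Vector, codebooks: List[List[Vector]]) -> List[int]:
    """Quantize one latent vector with a stack of codebooks, one index per level."""
    residual = list(z)
    codes = []
    for codebook in codebooks:
        # pick the codeword closest to the part of z not yet explained
        dists = [sq_dist(residual, c) for c in codebook]
        idx = dists.index(min(dists))
        codes.append(idx)
        residual = [r - c for r, c in zip(residual, codebook[idx])]
    return codes


def rvq_decode(codes: List[int], codebooks: List[List[Vector]]) -> Vector:
    """Sum the selected codewords across levels to approximate the latent."""
    out = [0.0] * len(codebooks[0][0])
    for idx, codebook in zip(codes, codebooks):
        out = [o + c for o, c in zip(out, codebook[idx])]
    return out
```

Encoding 5.2 with the codebooks {0, 4, 8}, {-1, 0, 1} and {-0.25, 0, 0.25} gives the codes [1, 2, 2], which decode to 5.25. Each extra level shrinks the reconstruction error (1.2, then 0.2, then 0.05), which illustrates why the first codebook carries most of the information.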

Method

The authors find that naively applying methods such as MaskGIT and Muse to RVQ-represented audio tokens does not work well in practice. They hypothesize that this is due to three factors:

- individual audio tokens share information with adjacent tokens;

- the first codebook usually encodes most of the information, while the temporal context of codebooks at levels greater than one is generally local and influenced by a small set of neighboring tokens;

- sampling from the model at different decoding steps requires different levels of diversity with respect to the condition. (I don't understand how this aspect is specific to the audio modality.)

Masking spans instead of single tokens. The authors' first solution is to use spans of tokens, rather than individual tokens, as the atomic building block of the masking scheme.
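A minimal sketch of span masking, assuming spans of a fixed length `span_len` (a hypothetical parameter here) whose start positions are drawn uniformly at random until a target masking rate is reached. Spans may overlap, so the masked fraction can slightly overshoot the target; the exact procedure in the paper may differ.

```python
import random
from typing import List


def span_mask(seq_len: int, mask_rate: float, span_len: int, rng: random.Random) -> List[bool]:
    """Boolean mask (True = masked) built from contiguous spans of span_len tokens.

    Requires 1 <= span_len <= seq_len and 0 <= mask_rate <= 1.
    """
    target = round(mask_rate * seq_len)
    mask = [False] * seq_len
    while sum(mask) < target:
        # choose a span start so the whole span fits inside the sequence
        start = rng.randrange(0, seq_len - span_len + 1)
        for i in range(start, start + span_len):
            mask[i] = True
    return mask
```

For example, `span_mask(100, 0.3, 3, random.Random(0))` masks between 30 and 32 positions, and every masked run is at least 3 tokens long. The intuition is that an isolated masked token could be recovered almost trivially from its unmasked neighbors, which share much of its information.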

Restricting context. Codebooks after the first one depend heavily on the preceding codebooks rather than on surrounding tokens. The authors therefore put this prior knowledge (an inductive bias) into the transformer's attention maps for those codebooks, limiting each token to a small temporal neighborhood, which helps narrow down the optimization process.
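One way to express such a restriction is a boolean attention mask that lets each query position attend only to keys within a fixed temporal window, with disallowed keys receiving zero weight in the softmax. This is a sketch under assumptions: the window size is hypothetical, and keeping full attention for the first codebook mirrors the description above rather than the paper's code.

```python
import math
from typing import List


def local_attention_mask(seq_len: int, window: int) -> List[List[bool]]:
    """allowed[i][j] is True iff query i may attend to key j, i.e. |i - j| <= window."""
    return [[abs(i - j) <= window for j in range(seq_len)] for i in range(seq_len)]


def attention_mask_for_codebook(level: int, seq_len: int, window: int) -> List[List[bool]]:
    # level 1 keeps global context; later levels only see nearby time steps
    if level == 1:
        return [[True] * seq_len for _ in range(seq_len)]
    return local_attention_mask(seq_len, window)


def masked_softmax(scores: List[float], allowed: List[bool]) -> List[float]:
    """Softmax over one row of attention scores; disallowed keys get zero weight."""
    m = max(s for s, ok in zip(scores, allowed) if ok)  # for numerical stability
    exps = [math.exp(s - m) if ok else 0.0 for s, ok in zip(scores, allowed)]
    total = sum(exps)
    return [e / total for e in exps]
```

Because every query is always allowed to attend to itself (`window >= 0`), each row has at least one permitted key and the softmax is well defined.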

Rescoring. Inspired by Automatic Speech Recognition (ASR) decoding, which usually generates several decoding candidates and rescores them with another model, the authors propose using an external model to evaluate the sampled token spans and predict a new set of probabilities for them. A weighted sum of MAGNeT's probabilities and the rescorer's probabilities is then used. Specifically, MusicGen and AudioGen serve as the rescoring models.
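The weighted sum can be sketched as a convex combination of per-token scores. The mixing weight `w` and the function name are hypothetical, and the sketch assumes both models provide a probability for each sampled token; how these are aggregated into span-level scores is not modeled here.

```python
from typing import List


def combine_scores(model_scores: List[float], rescorer_scores: List[float], w: float) -> List[float]:
    """Weighted sum of the generator's and the rescorer's per-token probabilities.

    w = 0 keeps the non-autoregressive model's scores; w = 1 trusts the rescorer fully.
    """
    if not 0.0 <= w <= 1.0:
        raise ValueError("w must lie in [0, 1]")
    if len(model_scores) != len(rescorer_scores):
        raise ValueError("score lists must have the same length")
    return [(1.0 - w) * a + w * b for a, b in zip(model_scores, rescorer_scores)]
```

Keeping the combination convex means the result stays in [0, 1], so it can be used in place of the original confidences without rescaling.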

Classifier-free guidance annealing. The authors gradually decrease the influence of the condition during generation, so the tokens generated first are influenced more strongly by the condition. As with Classifier-Free Guidance (CFG) in diffusion-based models, the model is optimized both conditionally and unconditionally during training, and at inference the tokens are sampled from a distribution formed as a linear combination of the conditional and unconditional probabilities. The annealed CFG coefficient is specified in Eq. (6) of the paper.
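A sketch of the annealing, assuming the guidance scale is interpolated linearly with the current masking rate (so early steps, where most tokens are still masked, get the stronger guidance) and that guidance is applied to logits in the common CFG form `uncond + scale * (cond - uncond)`. The function names and the start/end scales are hypothetical; Eq. (6) of the paper gives the exact coefficient.

```python
from typing import List


def annealed_cfg_scale(mask_rate: float, lambda_start: float, lambda_end: float) -> float:
    """Guidance scale for the current step.

    mask_rate near 1 (early steps) gives lambda_start; near 0 (late steps) gives lambda_end.
    """
    return mask_rate * lambda_start + (1.0 - mask_rate) * lambda_end


def guided_logits(cond: List[float], uncond: List[float], scale: float) -> List[float]:
    """Classifier-free guidance on logits: uncond + scale * (cond - uncond)."""
    return [u + scale * (c - u) for c, u in zip(cond, uncond)]
```

With, say, `lambda_start=10.0` and `lambda_end=1.0`, the first step (masking rate 1.0) uses a scale of 10.0, while the last steps approach 1.0, which reduces to plain conditional sampling.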

Comments

The authors make an attempt to use masked token modeling (an analog of masked language modeling in NLP) for audio generation and show that it works. The work is novel in audio generation, though similar ideas were explored in image generation. Many of the developments are quite empirical, so the ablation study is critical. The external rescorer models (MusicGen and AudioGen) make the model complicated. Is it really necessary to include them in the main paper? Moving this part to the appendix might be a better choice.