Read Robust Speech Recognition via Large-Scale Weak Supervision

Paper link: https://arxiv.org/pdf/2212.04356.pdf

Homepage: https://github.com/openai/whisper

D1

Motivation

From the introduction, the motivation of this paper is how to scale up data usage in a supervised manner.

The authors argue that previous unsupervised pre-training techniques, such as Wav2Vec 2.0, have several drawbacks:

- Previous unsupervised pre-training focuses on training a generic audio encoder, but not the decoder.

- The decoder is task specific.

- Fine-tuning carries risks. A model fine-tuned on one training dataset may not generalize well to held-out data, as some of the patterns it picks up are brittle and spurious and do not transfer to other datasets and distributions.

This work therefore studies the capabilities of large-scale supervised pre-training for speech recognition.

The reasoning in the introduction does not sound that convincing to me, as this seems to be a general concern for all unsupervised pre-training. Given the same amount of data, it is reasonable to choose supervised training over unsupervised training. For a task with a large amount of labeled data, supervised training is still preferred.

Models and tasks

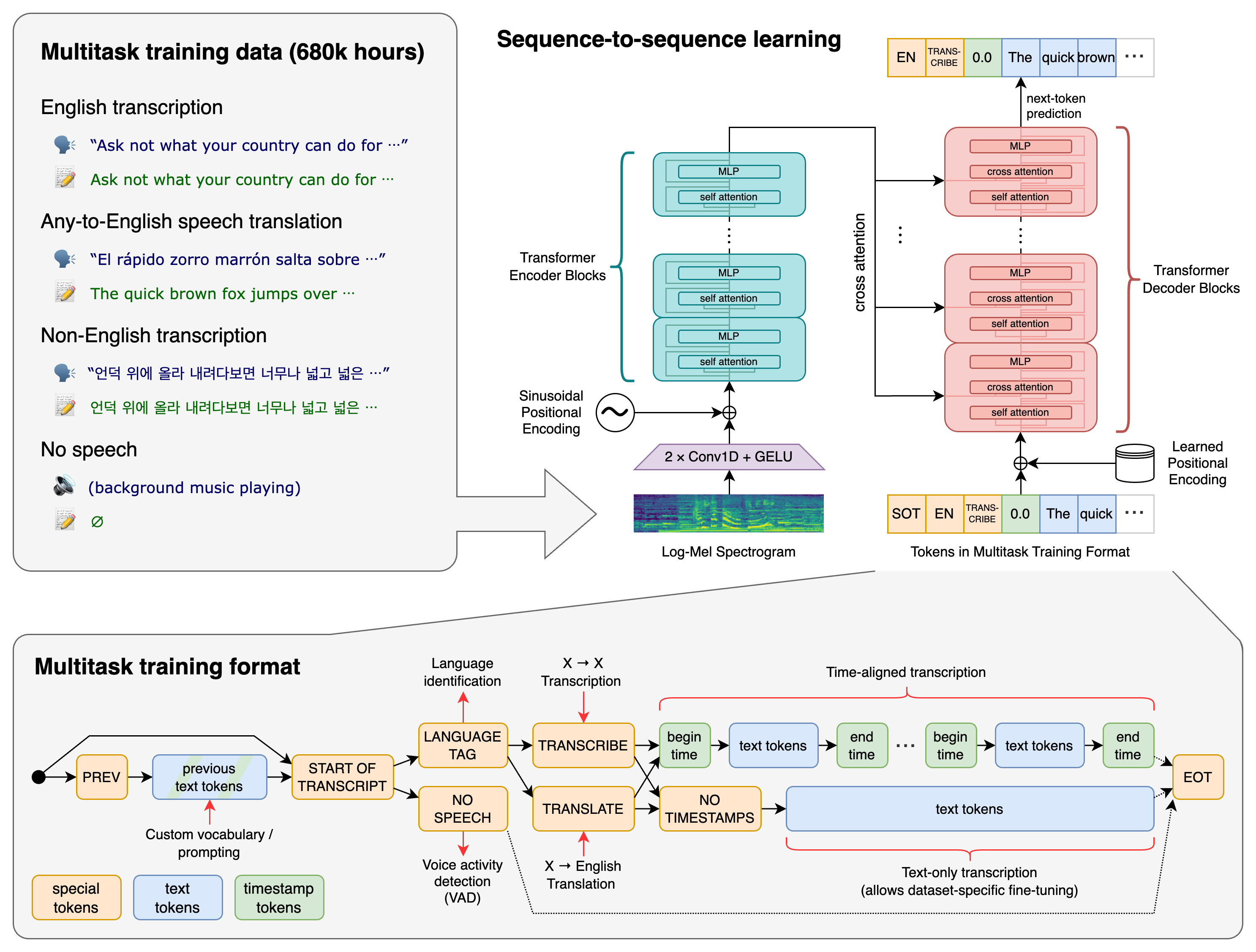

The overview figure (Figure 1 in the paper) provides a good explanation of the model and the multi-task training procedure.

Model Architecture

The model is a typical Attention Encoder-Decoder (AED) model, a design that goes back to the Listen, Attend and Spell paper.

Multi-task format

The authors include the following tasks: transcription, translation, voice activity detection, alignment, and language identification, but not speaker identification. The procedure is:

- optionally include the previous transcription as context

- begin token <|startoftranscript|>

- predict the language token

- if there is no speech, add the <|nospeech|> token

- specify the task: <|transcribe|> or <|translate|>

- choose whether to predict timestamps: <|notimestamps|> disables timestamp prediction

- after decoding, add <|endoftranscript|>

I feel a pseudo-code block would be a good addition to the paper.
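A minimal Python sketch of the steps listed above may make the format easier to follow. It is only an illustration written for these notes, not the authors' implementation: the function names `build_decoder_prompt` and `finish_sequence` are hypothetical, the `<|en|>`-style language token is an assumption, the previous transcription is kept as a plain string because these notes do not cover how it is delimited, and ending the sequence right after `<|nospeech|>` is my reading of the overview figure.

```python
from typing import List, Optional


def build_decoder_prompt(
    language: Optional[str],
    task: str = "transcribe",
    timestamps: bool = False,
    previous_text: Optional[str] = None,
) -> List[str]:
    """Assemble the token prefix that tells the decoder what to do.

    language=None stands for a segment that contains no speech.
    """
    if task not in ("transcribe", "translate"):
        raise ValueError(f"unknown task: {task}")

    tokens: List[str] = []
    if previous_text:
        # Optional context: text of the preceding segment.
        tokens.append(previous_text)
    tokens.append("<|startoftranscript|>")

    if language is None:
        # No speech: assumed here to end the sequence immediately.
        tokens.extend(["<|nospeech|>", "<|endoftranscript|>"])
        return tokens

    tokens.append(f"<|{language}|>")  # language token (format is an assumption)
    tokens.append(f"<|{task}|>")      # <|transcribe|> or <|translate|>
    if not timestamps:
        tokens.append("<|notimestamps|>")
    return tokens


def finish_sequence(prompt: List[str], decoded_text: str) -> List[str]:
    """Append the decoded text and the end token to a prompt."""
    return prompt + [decoded_text, "<|endoftranscript|>"]


prompt = build_decoder_prompt("en")
print(prompt)
# ['<|startoftranscript|>', '<|en|>', '<|transcribe|>', '<|notimestamps|>']
print(finish_sequence(prompt, "hello world"))
```

The point of the format is that a single decoder handles every task: switching from transcription to translation, or turning timestamps on, only changes the prefix tokens.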

D2

Zero-shot

The main goal of this paper is to show that, with a large amount of data that is diverse in many aspects, a speech recognition system can generalize well, which means it performs well in the zero-shot setting.

About the zero-shot claim

I still find that the zero-shot setting is not really 'zero-shot', and the term is somewhat strange to me. 'Zero-shot' here means that a specific test dataset is not included in the training data. But this does not mean there is no overlap between the distribution of that dataset and the distribution of the training data.

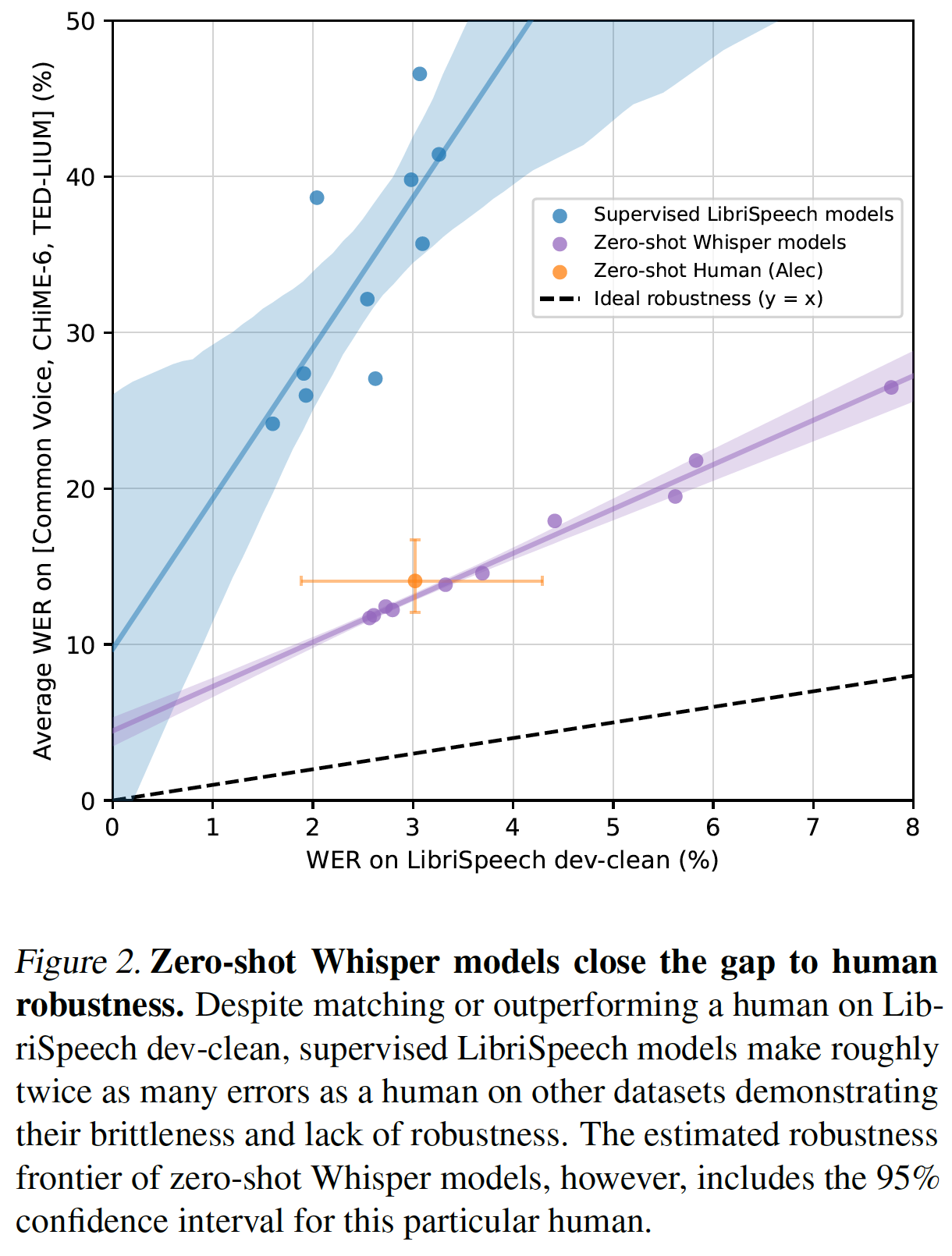

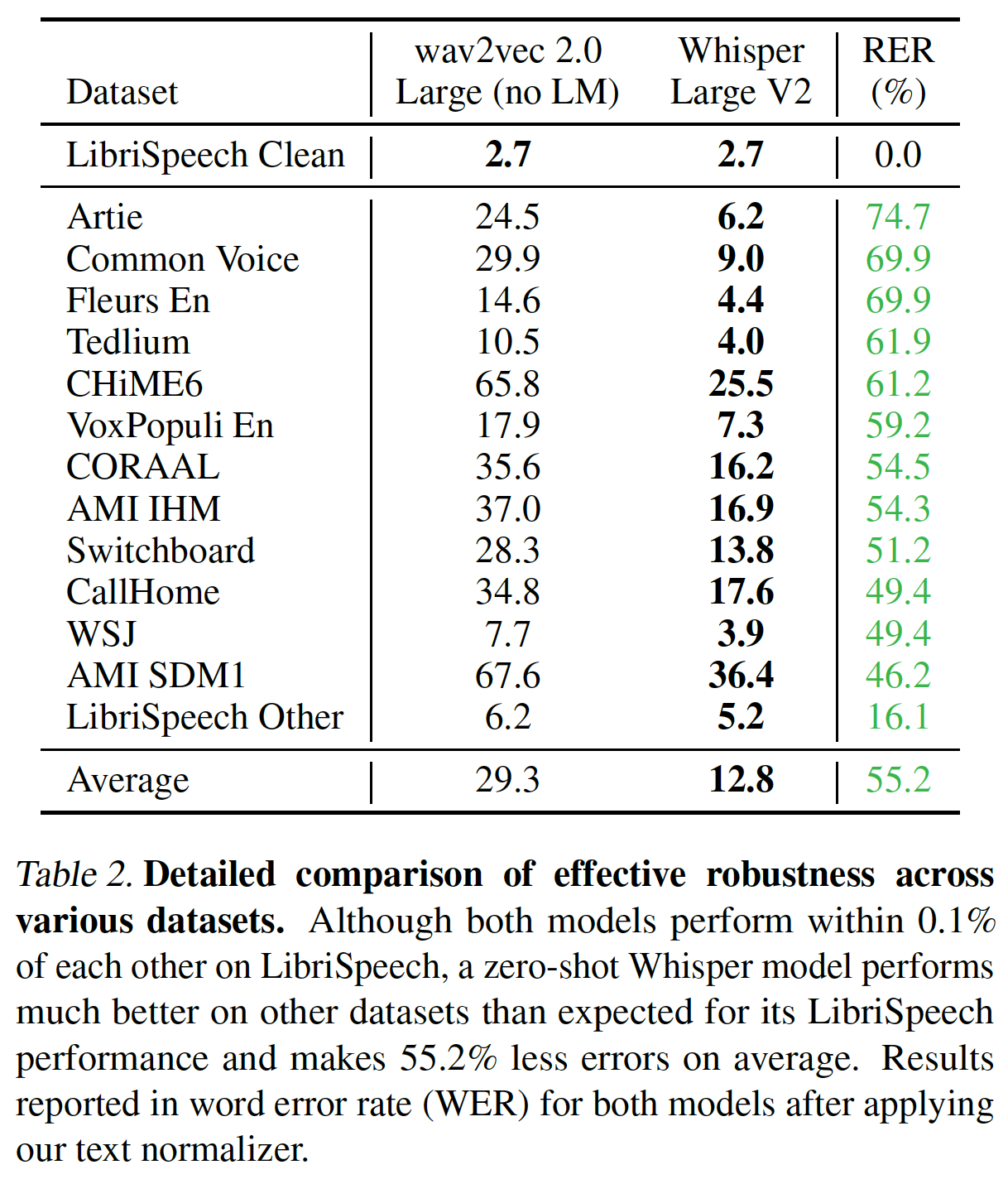

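The above two figures summarize the zero-shot performance. The authors report both overall robustness, that is, average performance across many distributions/datasets, and effective robustness, which measures the difference in expected performance between a reference dataset, usually in-distribution, and one or more out-of-distribution datasets.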

Multilingual

Another finding concerns multilingual speech recognition performance. Whisper is not uniformly superior: it performs well on Multilingual LibriSpeech but underperforms prior work on VoxPopuli. The authors find a strong squared correlation coefficient of 0.83 between the log of the word error rate and the log of the amount of training data per language, which results in an estimate that WER halves for every 16x increase in training data. Many of the largest outliers in terms of worse-than-expected performance are languages that have unique scripts and are more distantly related to the Indo-European languages, such as Hebrew (HE), Telugu (TE), Chinese (ZH), and Korean (KO). This observation is interesting, but I find it purely empirical. The authors suspect the reason is:

These differences could be due to a lack of transfer due to linguistic distance, our byte level BPE tokenizer being a poor match for these languages, or variations in data quality.
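Returning to the scaling estimate: "WER halves for every 16x increase in training data" is equivalent to a power law with exponent -1/4, since 16^(-1/4) = 1/2. The sketch below works through that arithmetic. It assumes the power law holds exactly, whereas the paper only reports a fitted trend with notable outliers, and the function names and example numbers are hypothetical.

```python
import math


def scaling_exponent(data_factor: float = 16.0, wer_factor: float = 0.5) -> float:
    """Exponent k such that WER is proportional to hours**k."""
    return math.log(wer_factor) / math.log(data_factor)


def projected_wer(wer_now: float, hours_now: float, hours_new: float) -> float:
    """Extrapolate WER along the assumed power law (illustrative only)."""
    k = scaling_exponent()
    return wer_now * (hours_new / hours_now) ** k


print(scaling_exponent())  # approximately -0.25

# Made-up example: a language at 20% WER with 100 hours of data.
# 16x more data (1600 hours) should roughly halve the WER.
print(projected_wer(20.0, 100.0, 1600.0))  # approximately 10.0
```

The small exponent means that doubling the data for a language only reduces its WER by about 16% under this trend.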

Translation

The authors find that translation from other languages into English is better than prior work such as Maestro, mSLAM, and XLS-R on the subset of CoVoST2. They attribute this to the 68 thousand hours of x->en translation data for these languages in the pre-training dataset which, although noisy, is vastly larger than the 861 hours of training data for x->en translation in CoVoST2.

The squared correlation coefficient between the amount of translation training data per language and the resulting zero-shot BLEU score on Fleurs is much lower than the 0.83 observed for speech recognition and is only 0.24.
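For reference, a squared correlation coefficient like the ones quoted here can be computed as follows. This is a generic sketch, not the authors' analysis code: the helper name `squared_correlation` is hypothetical, and the per-language numbers are synthetic values chosen to follow an exact power law, not data from the paper.

```python
import math
from typing import Sequence


def squared_correlation(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Squared Pearson correlation coefficient between two sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant input")
    return (sxy * sxy) / (sxx * syy)


# Synthetic example: WER halves each time the hours grow 16x.
hours = [1.0, 16.0, 256.0, 4096.0]
wer = [80.0, 40.0, 20.0, 10.0]

# For speech recognition the correlation is taken in log-log space.
r2 = squared_correlation([math.log(h) for h in hours],
                         [math.log(w) for w in wer])
print(round(r2, 6))  # 1.0 for this noise-free synthetic data
```

A value of 0.24 instead of 0.83 means the amount of training data explains far less of the per-language variation in translation quality than in recognition accuracy.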

Language Identification

The Fleurs dataset is used. The zero-shot performance of Whisper is not competitive with prior supervised work and underperforms the supervised SOTA by 13.6%. This is partly because 20 of the 102 languages in Fleurs are not in Whisper's training data. The best Whisper model achieves 80.3% accuracy on the other 82 languages.
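The effect of the 20 missing languages can be made concrete with a little arithmetic. The sketch below assumes that every Fleurs language carries equal weight in the test set and that the model can never identify a language it was not trained on. Both are simplifying assumptions of mine, and the function name is hypothetical.

```python
def accuracy_ceiling(total_languages: int, unseen_languages: int) -> float:
    """Best possible accuracy if every unseen language is always wrong.

    Assumes each language contributes equally to the test set.
    """
    if not 0 <= unseen_languages <= total_languages or total_languages == 0:
        raise ValueError("invalid language counts")
    return (total_languages - unseen_languages) / total_languages


ceiling = accuracy_ceiling(total_languages=102, unseen_languages=20)
print(f"{ceiling:.1%}")  # 80.4%
```

Under these assumptions, even a perfect classifier on the 82 covered languages could not exceed roughly 80% overall accuracy on Fleurs.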