Read: Harnessing the Power of LLMs in Practice: A Survey on ChatGPT and Beyond

Paper link: https://arxiv.org/pdf/2304.13712.pdf

Homepage: https://github.com/Mooler0410/LLMsPracticalGuide

This is a survey paper, included here because it gives a good overview of current Large Language Models (LLMs).

D1 practical guide for models

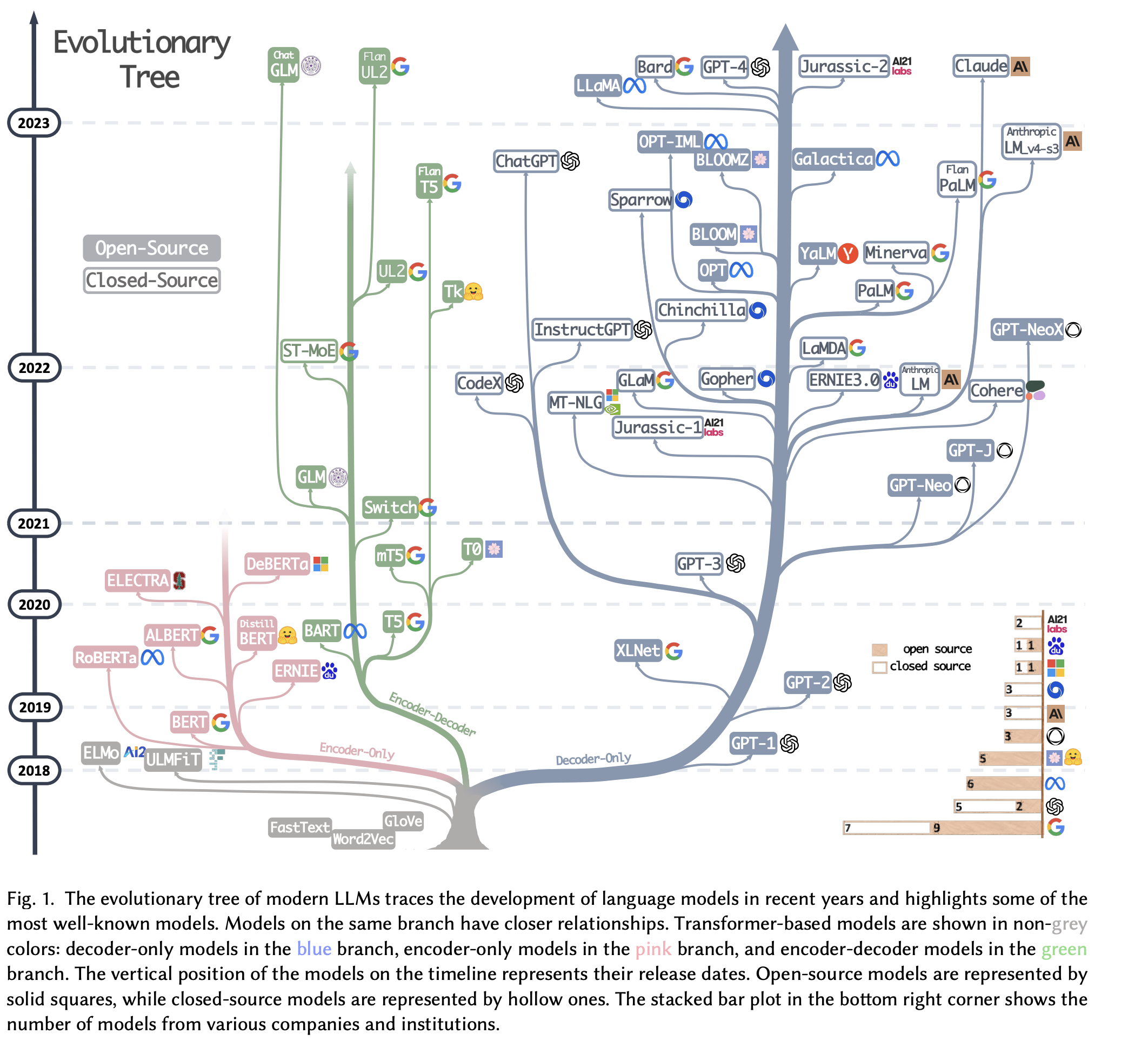

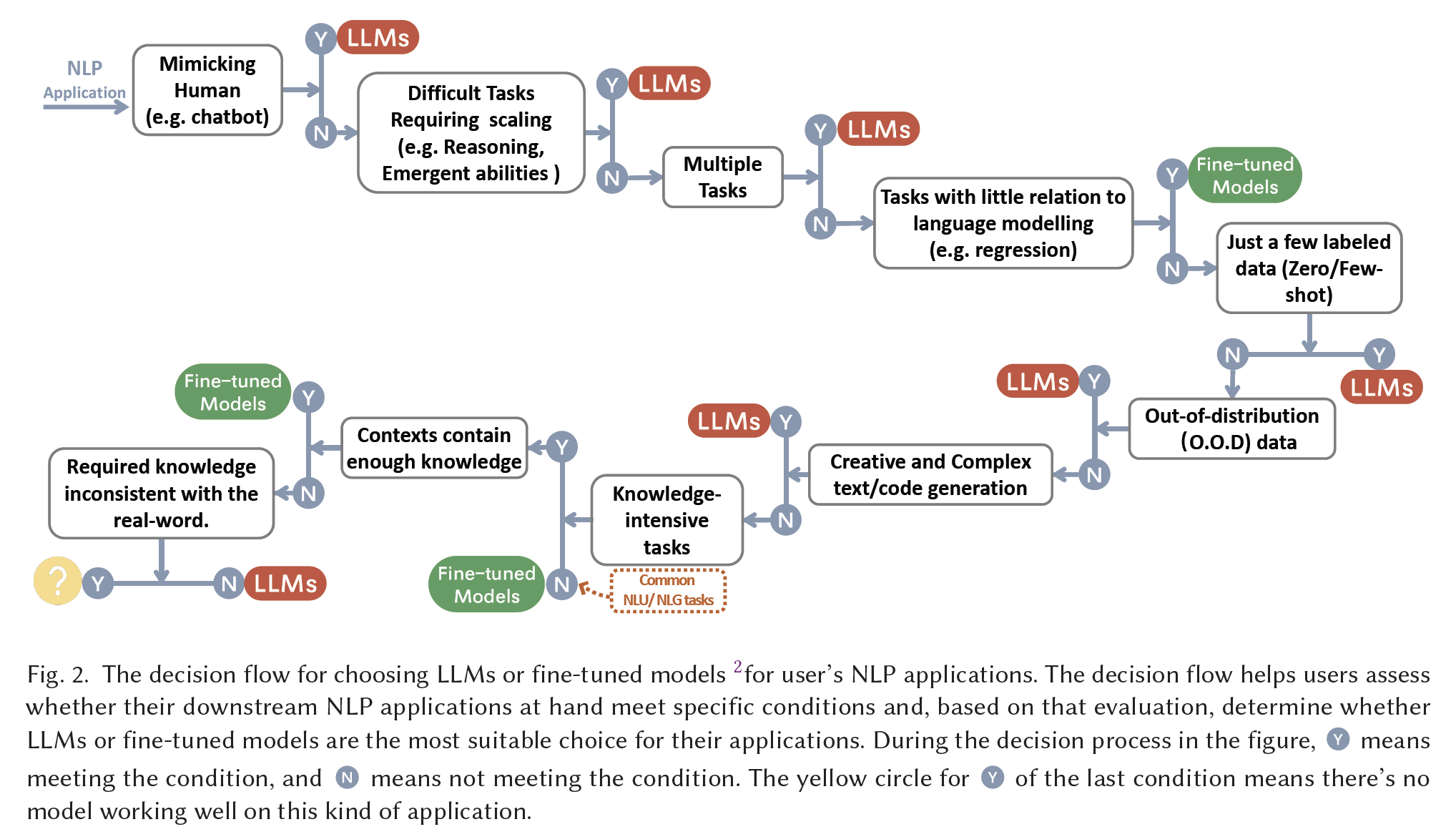

The authors divide LLMs into two categories: encoder-decoder or encoder-only models, and decoder-only models. They observe that decoder-only models now dominate the field, while encoder-only models have gradually faded away since BERT.

Some models:

- BERT proposed the Masked Language Model (MLM), which predicts a masked region from the surrounding context.

- GPT-3, an autoregressive language model (it predicts the next word from the preceding words), demonstrated reasonable few-/zero-shot performance via prompting and in-context learning.
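The difference between the two training objectives above can be made concrete with a toy sketch. This is only an illustration of how the prediction targets are formed, not how BERT or GPT-3 are actually implemented; the `[MASK]` string and both helper functions are hypothetical names chosen for this example.

```python
from typing import Dict, List, Tuple

MASK = "[MASK]"  # placeholder token; the exact string is an assumption


def masked_lm_example(
    tokens: List[str], positions: List[int]
) -> Tuple[List[str], Dict[int, str]]:
    """Masked LM: hide some tokens; the model sees context on BOTH sides."""
    masked = [MASK if i in positions else tok for i, tok in enumerate(tokens)]
    targets = {i: tokens[i] for i in positions}
    return masked, targets


def autoregressive_examples(tokens: List[str]) -> List[Tuple[List[str], str]]:
    """Autoregressive LM: each token is predicted from the PRECEDING tokens only."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


tokens = ["the", "cat", "sat", "on", "the", "mat"]

masked, targets = masked_lm_example(tokens, [2])
print(masked)   # input with position 2 hidden
print(targets)  # what the model must recover at that position

pairs = autoregressive_examples(tokens)
print(pairs[1])  # (context so far, next word to predict)
```

In the masked case the model can use "on the mat" to recover the hidden word, while in the autoregressive case it only ever sees the left context.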

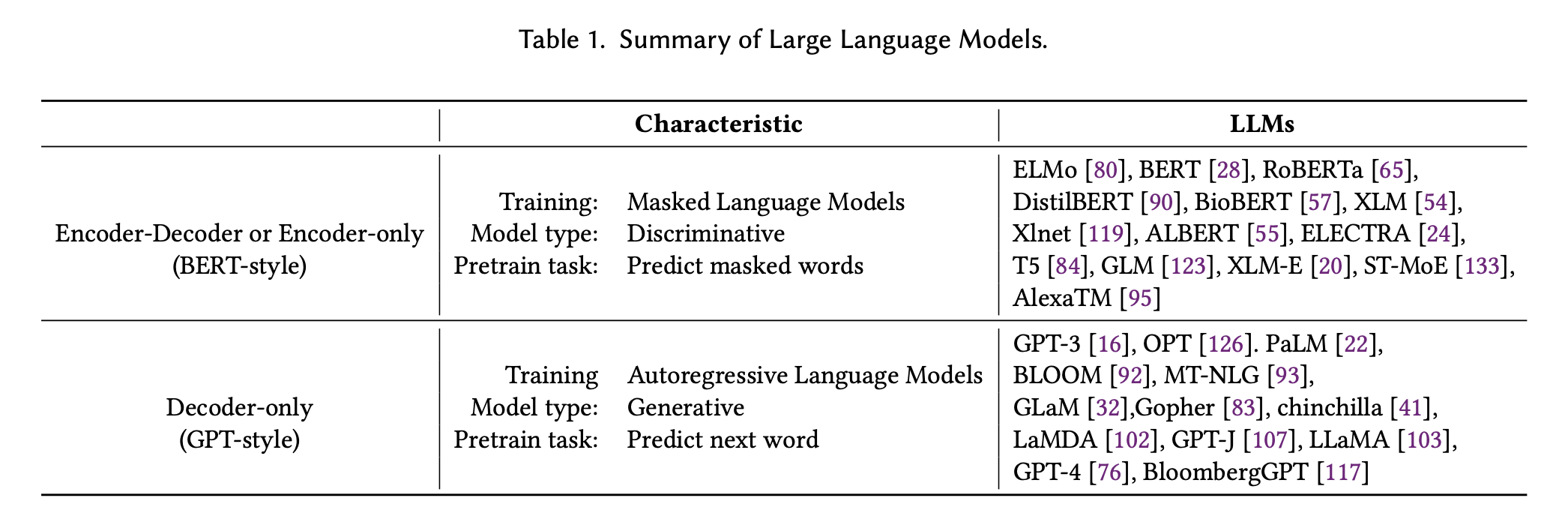

The paper defines the two groups as follows: LLMs are huge language models pretrained on large amounts of data without tuning on data for specific tasks; fine-tuned models are typically smaller language models that are also pretrained and then further tuned on a smaller, task-specific dataset to optimize their performance on that task.

Footnote:

From a practical standpoint, we consider models with less than 20B parameters to be fine-tuned models. While it’s possible to fine-tune even larger models like PaLM (540B), in reality, it can be quite challenging, particularly for academic research labs and small teams. Fine-tuning a model with 3B parameters can still be a daunting task for many individuals or organizations.

D2 practical guide for data

LLMs go through at least two stages: pre-training and fine-tuning.

- pretraining data: this data covers a large number of categories and is huge in volume. It includes books, articles, and websites. The quality, quantity, and diversity of this data significantly influence the performance of LLMs.

  The selection of LLMs highly depends on the components of the pretraining data ... code execution and code completion capabilities of GPT-3.5 (code-davinci-002) are amplified by the integration of code data in its pretraining dataset. In brief, when selecting LLMs for downstream tasks, it is advisable to choose the model pre-trained on a similar field of data.

- fine-tuning data: the authors further divide it into three scenarios:

  - zero annotated data: there is not much information in the manuscript. It just praises the zero-shot performance of LLMs.

  - few annotated data: a few annotated examples are quite useful. The few-shot examples are directly incorporated in the input prompt of LLMs. This in-context learning is effective at making LLMs generalize to the task.

  - abundant annotated data: task specific. Both fine-tuned models and LLMs can be considered. Fine-tuning a model might be cheaper.
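For the "few annotated data" scenario, the idea of placing the annotated examples directly into the input prompt can be sketched as below. This is a minimal illustration, not the paper's method: the `build_few_shot_prompt` helper, the `Review:`/`Sentiment:` template, and the sample reviews are all assumptions made up for this example, and the resulting string would still need to be sent to whichever LLM is being used.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    instruction: str,
    examples: List[Tuple[str, str]],
    query: str,
) -> str:
    """Concatenate an instruction, labelled examples, and the unlabelled query."""
    parts = [instruction.strip(), ""]
    for text, label in examples:
        parts.append(f"Review: {text}")
        parts.append(f"Sentiment: {label}")
        parts.append("")  # blank line between examples
    parts.append(f"Review: {query}")
    parts.append("Sentiment:")  # the LLM is expected to continue from here
    return "\n".join(parts)


# Hypothetical annotated examples (the "few annotated data").
examples = [
    ("A moving story with great acting.", "positive"),
    ("Two hours I will never get back.", "negative"),
]

prompt = build_few_shot_prompt(
    "Classify the sentiment of each movie review as positive or negative.",
    examples,
    "The plot was thin but the visuals were stunning.",
)
print(prompt)
```

No model weights are updated here; the examples only condition the model at inference time, which is why this works with very little annotated data.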

practical guide for NLP task

Fine-tuned models are generally a better choice than LLMs in traditional NLU tasks, but LLMs can help when strong generalization ability is required.

Tasks:

- text classification, datasets: IMDB, SST. For toxicity detection (dataset: CivilComments), fine-tuned models are far better than LLMs. Perspective API is still one of the best tools for detecting toxicity; it is powered by a multilingual BERT-based model.

- natural language inference, datasets: RTE, SNLI (fine-tuned models are better). For question answering (QA), datasets: SQuADv2, QuAC; fine-tuned models are better. On CoQA, LLMs perform as well as fine-tuned models.

- information retrieval (IR): LLMs are not yet widely exploited. Ranking thousands of candidate texts does not seem easy for LLMs.

- low-level intermediate tasks like named entity recognition (NER). The authors predict that these intermediate tasks may be skipped by LLMs, since LLMs can solve high-level tasks without the help of those intermediate results.

- tasks with out-of-distribution and sparsely annotated data, like Adversarial NLI (ANLI) and miscellaneous text classification: LLMs are better.

practical guide for generation task

LLMs can perform summarization and translation and generate results that humans prefer. They translate competently and are particularly good at translating some low-resource language texts into English, such as in the Romanian-English translation of WMT’16…

- good at open-ended generation, like news article writing

- good at code synthesis: LLMs perform well on text-to-code generation, such as HumanEval and MBPP, and on code repair, such as DeepFix. GPT-4 can even pass 25% of the problems on LeetCode, which are not trivial for most human coders. With training on more code data, the coding capability of LLMs can be improved further [PaLM].

PaLM

Large language models have been shown to achieve remarkable performance across a variety of natural language tasks using few-shot learning, which drastically reduces the number of task-specific training examples needed to adapt the model to a particular application. To further our understanding of the impact of scale on few-shot learning, we trained a 540-billion parameter, densely activated, Transformer language model, which we call Pathways Language Model (PaLM). We trained PaLM on 6144 TPU v4 chips using Pathways, a new ML system which enables highly efficient training across multiple TPU Pods. We demonstrate continued benefits of scaling by achieving state-of-the-art few-shot learning results on hundreds of language understanding and generation benchmarks. On a number of these tasks, PaLM 540B achieves breakthrough performance, outperforming the finetuned state-of-the-art on a suite of multi-step reasoning tasks, and outperforming average human performance on the recently released BIG-bench benchmark. A significant number of BIG-bench tasks showed discontinuous improvements from model scale, meaning that performance steeply increased as we scaled to our largest model. PaLM also has strong capabilities in multilingual tasks and source code generation, which we demonstrate on a wide array of benchmarks. We additionally provide a comprehensive analysis on bias and toxicity, and study the extent of training data memorization with respect to model scale. Finally, we discuss the ethical considerations related to large language models and discuss potential mitigation strategies.

knowledge intensive tasks

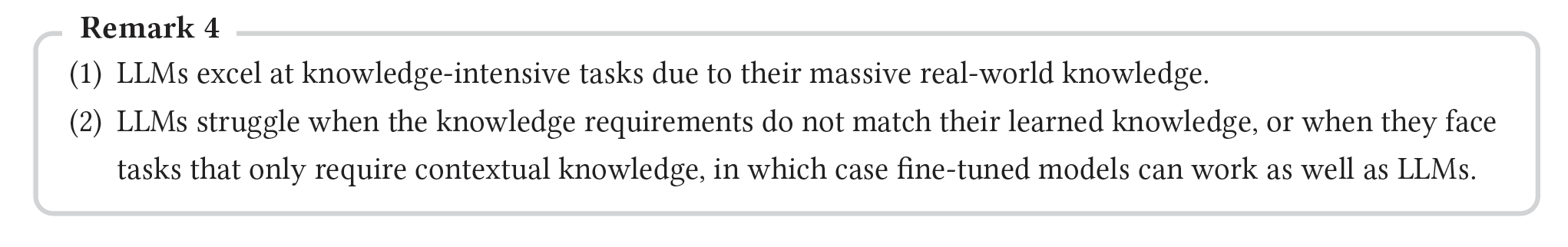

Use: tasks that need real-world knowledge, like question-answering, multitask language understanding etc.

No use: tasks that require knowledge different from what the LLM has learned, or tasks where only contextual knowledge provided at inference time is needed.

D3 Scaling

The ‘scaling-law’

Scaling law

We study empirical scaling laws for language model performance on the cross-entropy loss. The loss scales as a power-law with model size, dataset size, and the amount of compute used for training, with some trends spanning more than seven orders of magnitude. Other architectural details such as network width or depth have minimal effects within a wide range. Simple equations govern the dependence of overfitting on model/dataset size and the dependence of training speed on model size. These relationships allow us to determine the optimal allocation of a fixed compute budget. Larger models are significantly more sample-efficient, such that optimally compute-efficient training involves training very large models on a relatively modest amount of data and stopping significantly before convergence.

The key findings for Transformer language models are:

- Performance depends strongly on scale, weakly on model shape: scale consists of the number of model parameters N (excluding embeddings), the size of the dataset D, and the amount of compute C used for training. Performance depends only weakly on other architectural hyperparameters such as depth and width.

- Smooth power laws: performance has a power-law relationship with each of the three factors N, D, C.

- Universality of overfitting: Performance improves predictably as long as we scale up N and D in tandem, but enters a regime of diminishing returns if either N or D is held fixed while the other increases. The performance penalty depends predictably on the ratio N^0.74/D, meaning that every time we increase the model size 8x, we only need to increase the data by roughly 5x to avoid a penalty.

- Universality of training: Training curves follow predictable power-laws whose parameters are roughly independent of the model size. By extrapolating the early part of a training curve, we can roughly predict the loss that would be achieved if we trained for much longer.

- Transfer improves with test performance: (I did not understand).

- Sample efficiency (the amount of information an algorithm can get from samples): (I did not understand).

- Convergence is inefficient: When working within a fixed compute budget C but without any other restrictions on the model size N or available data D, we attain optimal performance by training very large models and stopping significantly short of convergence.

- Optimal batch size: (I did not understand).

- Arithmetic reasoning improves as models scale up, but LLMs can still occasionally make mistakes.

- Commonsense reasoning gradually improves with the growth of model size.

- Emergent abilities: abilities of LLMs that are not present in smaller-scale models but are present in large-scale models. They are not predictable. Some emergent abilities are interesting, such as handling word manipulation and logical abilities. More research is needed to understand them, as well as the U-shaped phenomenon.
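The "8x model, roughly 5x data" figure in the overfitting finding above follows directly from keeping the ratio N^0.74/D constant: if N grows by a factor k, D must grow by k^0.74. A small sketch of that arithmetic, where the function name is made up for this note and the only number taken from the source is the 0.74 exponent:

```python
def data_growth_factor(model_growth: float, exponent: float = 0.74) -> float:
    """Data multiplier that keeps N**exponent / D constant when N grows by model_growth."""
    return model_growth ** exponent


for growth in (2, 8, 64):
    print(f"model x{growth} -> data x{data_growth_factor(growth):.2f}")

# For an 8x larger model this gives about 4.66, i.e. "roughly 5x" more data.
```

Because the exponent is below 1, the required data grows more slowly than the model, which is consistent with the claim that larger models are more sample-efficient.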

Miscellaneous tasks

- No use case:

LLMs’ performance in regression tasks has been less impressive. For example, ChatGPT’s performance on the GLUE STS-B dataset, which is a regression task evaluating sentence similarity, is inferior to that of a fine-tuned RoBERTa. LLMs’ performance on multimodal data, which involves handling multiple data types such as text, images, audio, video, actions, and robotics, remains largely unexplored. Fine-tuned multimodal models, like BEiT and PaLI, still dominate many tasks such as visual question answering (VQA) and image captioning. (This seems to be outdated information.)

- Use case: mimicking humans (such as ChatGPT), acting as an annotator and data generator for data augmentation, and quality assessment on some NLG tasks.